Nobody knows how the whole system works

(surfingcomplexity.blog)

from codeinabox@programming.dev to programming@programming.dev on 02 Mar 2026 15:54

https://programming.dev/post/46579602

#programming

True, but my compiler isn’t demanding a $200/month subscription from me.

They used to though

Some still do. You’re spending the same amount if you still need Delphi in the current year, for instance.

There is a fundamental difference this post is pretending doesn’t exist.

Trusting the abstractions of compilers and fundamental widely used libraries is not a problem because they are deterministic and battle tested.

LLMs do not add a layer of abstraction. They produce code at the existing layer of abstraction.

They’re also not equivalent because the pretense of LLMs is to remove the human from the equation, essentially saying that we don’t need that knowledge anymore. But people still do have that knowledge. Those telephone systems still work because someone knows how each part works. That will never be true for an LLM.

I’m more than confident that - if you actually went down to AT&T HQ and really dug into the weeds - you’d find blind spots in the network created by people leaving the company and failing to back-fill their expertise. Or people who incorrectly documented this or that, forcing their coworkers to rediscover the error the hard way.

I think we do underestimate how many systems are patched over, lost in the weeds, or fully reinvented (by accident or as a necessary replacement) because somebody in the chain of knowledge was never retained or properly replaced.

I would be very dubious of the theory that anyone at AT&T could recreate their system network from the 1980s without relying on all the modernizations and digitized efficiencies, for instance. No way in hell they could reproduce the system from the 1940s, because all that old hardware (never mind the personnel) was rendered obsolete ages ago. But I’m sure there are still lines in the ground that were laid decades ago and are still in use. Possibly lines that they’ve totally lost track of and simply know exist because the system hasn’t failed yet.

But that’s the strength of society to begin with: no one person knows how any given complex system works, because it’s impossible for one person to know. We come together in specialised groups to create these systems over time with the collective knowledge of many people.

Idk if I’d call it a “strength”. Feels more like a weakness.

But sure. This is the reason bureaucracies exist. Knowledge accrual, organization of specialties, long term investment planning, and distribution of surplus… as critical today as it was 8,000 years ago.

Agree that it weakens certain things, but I don’t see how we can overcome that. It’s great to have a knowledgeable GP as your doctor, but their breadth of knowledge causes them to fail at a deep knowledge of specific disease states. So he might be able to determine you have cancer, which then causes him to send you to an oncologist who specializes in that area.

Basically, there is a limit to the volume of information a human can hold. This was partially what AI advertised it could help overcome, but it’s so much worse than expected. If we could somehow increase the volume of information a human could hold and process, you’d be in much better shape for those doctor visits that end in “well, I guess this symptom is just you getting older” when really it’s SOMETHING but the doctor completely lacks the knowledge of that area.

I mean, the alternative is to be like octopi: intelligent but forever stuck at the beginning of the Stone Age due to a lack of ability to aggregate and accumulate knowledge over time.

Stupid cephalopods. Learn to breed without dying, idiots!

You would be largely correct. Though I was kind of amused by the “Does anyone know how their telephone works?” ca. 1994 example, which then listed a bunch of things that I very much know. So maybe a bad example.

Well, like with the Netflix question, you can keep going deeper until you hit the unknown. At some point, the person asking the question doesn’t know the questions to ask to get to that next level, though.

Many of those questions aren’t particularly useful either. They may be interesting from a philosophical perspective but that’s the language of thinking, not the language of doing. I’ve written code in assembly language. I’ve looked at the output in binary. I can explain in basic terms what is happening there. Does that help anybody?

I would argue that it doesn’t because almost everyone writes code in higher level languages. Even if I can tell you what I know it doesn’t provide any practical information for you to use. Similarly, I could explain to you how long division works but the next time you need to divide two numbers you’re still going to reach for a calculator instead of a pencil and paper. What then is the point of lamenting the loss of knowledge that no one uses directly? It could be reconstructed from what remains if necessary but since it isn’t necessary it doesn’t matter.

Really depends on where the bug lives.

Most people write mediocre code. A lot of people write shit code. One reason a particular application or function runs faster than another is how the high-level language gets compiled into assembly. Understanding how higher-level languages translate down into lower-level logic helps to reveal points in the code that are inefficient.

Just from a Big-O notation level, knowing when you’ve moved yourself from O(n log n) to O(n²) complexity is critical to writing efficient code. Knowing when you’re running into caching issues and butting up against processing limits informs how you allocate system resources. This doesn’t even have to go all the way to programming, either. A classic problem in old versions of Excel and Notepad was that a file with too much text might not even open properly. Understanding the underlying limits of your system is fundamental to using it properly.
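
To make the O(n log n) versus O(n²) point concrete, here is a minimal sketch in Python (the function names are hypothetical, chosen for illustration). Both functions answer the same question, "does this list contain a duplicate?", but the first compares every pair while the second sorts first, so it is easy to slide into the quadratic version without noticing.

```python
import random
import time


def has_duplicates_quadratic(items):
    # Compares every pair: about n*(n-1)/2 comparisons, so O(n^2).
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_sorted(items):
    # Sorting costs O(n log n); equal values then sit next to each other,
    # so a single linear pass over adjacent pairs is enough.
    ordered = sorted(items)
    return any(a == b for a, b in zip(ordered, ordered[1:]))


if __name__ == "__main__":
    # 2,000 distinct values, so neither function can exit early.
    data = random.sample(range(10_000_000), 2_000)
    for fn in (has_duplicates_quadratic, has_duplicates_sorted):
        start = time.perf_counter()
        fn(data)
        print(f"{fn.__name__}: {time.perf_counter() - start:.4f}s")
```

Doubling the input roughly quadruples the quadratic version's running time, while the sorted version grows only slightly faster than linearly. Exact timings depend on the machine.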

Knowing how to do long division is useful for validating the results of a calculator. People mistype values all the time. Whether they take the result at face value or double-check their work hinges on their ability to intuit whether the result matches their expectations. When I think I typed 4/5 into a calculator and get back 1.2, I know I made a mistake without having to know the true correct answer.
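
As a rough illustration of both halves of that argument, here is a sketch in Python, assuming non-negative integer inputs (the helper names are hypothetical). The first function is schoolbook long division, bringing down a zero at each step. The second is the quick sanity check described above: if the numerator is smaller than the denominator, the quotient must be below 1, so a result of 1.2 for 4/5 is wrong.

```python
def long_division(numerator, denominator, digits=6):
    """Schoolbook long division of non-negative integers.

    Returns a decimal string truncated (not rounded) to `digits` places.
    """
    if denominator <= 0 or numerator < 0:
        raise ValueError("sketch only handles numerator >= 0, denominator > 0")
    whole, remainder = divmod(numerator, denominator)
    out = [str(whole), "."]
    for _ in range(digits):
        remainder *= 10  # "bring down" a zero
        digit, remainder = divmod(remainder, denominator)
        out.append(str(digit))
    return "".join(out)


def plausible_quotient(numerator, denominator, claimed):
    # Magnitude check only: numerator < denominator means the quotient is < 1,
    # otherwise it is >= 1 (assuming positive values).
    if numerator < denominator:
        return claimed < 1
    return claimed >= 1


if __name__ == "__main__":
    print(long_division(4, 5))             # 0.800000
    print(plausible_quotient(4, 5, 1.2))   # False: 1.2 came from a typo
```

The magnitude check does not need the exact answer. It only needs enough understanding of division to know roughly where the answer has to land.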

One of the cruelest tricks in the math exam playbook is to include the answers you’d get from common typing mistakes among the multiple-choice options.

It’s not lamenting the loss of knowledge, but the inability to independently validate truth.

Without an underlying understanding of a system, what you have isn’t a technology but a religion.

It could be, as long as we have people with the required competencies.

But now we are seeing those competencies not being valued (this was happening before AI, and I’d say that the current AI usage is a result of it rather than the cause), which will reduce their development in future generations and then lead to scarcity.

Also, this interpretation is just wrong.

The benefits most certainly do not outweigh the “risks”, because they are in fact not at all risks, but an actively happening disaster of environmental, social and cognitive nature.

Also, the entire argument of “when you go deep enough nobody actually fully understands anything” is stupid. The people who actually understand things most deeply are the people creating all our cutting-edge technology. Modern processors wouldn’t exist without people trying to go as deep as possible.

Author should probably read “I, Pencil”

Compiling code to machine instructions is deterministic. That’s not the case with LLMs.
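
A toy Python sketch of where the non-determinism usually comes from, assuming a made-up next-token probability table (`NEXT_TOKEN_PROBS` and the helper names are hypothetical; this is not how any particular model is served). Greedy selection always returns the same token for the same input. Sampling in proportion to the probabilities, as chat-style generation commonly does, can return different tokens on each run unless the random generator is seeded identically.

```python
import random

# Hypothetical next-token probabilities for a single step. A real model
# computes these from the prompt; a fixed table is enough to show the idea.
NEXT_TOKEN_PROBS = {"return": 0.5, "yield": 0.3, "raise": 0.2}


def greedy_pick(probs):
    # Always takes the most likely token: same input, same output.
    return max(probs, key=probs.get)


def sampled_pick(probs, rng):
    # Draws a token in proportion to its probability: same input, varying
    # output unless `rng` starts from the same seed.
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


if __name__ == "__main__":
    rng = random.Random()  # unseeded, like most interactive use
    print([greedy_pick(NEXT_TOKEN_PROBS) for _ in range(5)])
    print([sampled_pick(NEXT_TOKEN_PROBS, rng) for _ in range(5)])
```

The first list is always five copies of `return`. The second typically mixes tokens and changes between runs.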

abstracting away determinism /s

I think this is the nut of building out a skilled development team. You need different people at different levels who know their area of expertise well and who are willing to admit where it ends, such that they can reach out to the next guy to step in and assist.

But also, you need the Full Code Stack as it were. Or, at least, you need a way to know where your blind spots are and understand the limit of your capacity. Otherwise, you run the risk of an “innovator” asking why they can’t just dump canola oil into their gas tank or how come you can’t just use hydrogen instead of helium for your balloon. And worse - plowing ahead because nobody outside of their cubicle stepped in to stop them.

You run the risk of destroying a lot of your own hard work - and possibly a lot of other people’s hard work - because you didn’t realize your own limits or know where to go to exceed them.

If only it was just a problem of understanding.

The thing is: Programming isn’t primarily about engineering. It’s primarily about communication.

That’s what allows developers to deliver working software without understanding how a compiler works: they can express ideas in terms of other ideas that already have a well-defined relationship to those technical components that they don’t understand.

That’s also why generative AI may end up setting us back a couple of decades. If you’re using AI to read and write the code, there is very little communicative intent making it through to the other developers. And it’s a self-reinforcing problem. There’s no use reading AI-generated code to try to understand the author’s mental model, because very little of their mental model makes it through to the code.

This is not a technical problem. This is a communication problem. And programmers in general don’t seem to be aware of that, which is why it’s going to metastasize so viciously.

As a senior developer, I use the new AIs. They’re absolutely amazing and a huge timesaver if you use them well. As with any powerful tool, it’s possible to over-use and under-use it, and not achieve those gains.

However, I disagree with the comparison to knowing how hardware works. There’s a pretty big difference between these 2 things:

Letting another company design and maintain the hardware or a library, and not understanding the internals yourself.

Letting someone/something design and implement a core part of your code that you are responsible for maintaining, and not understanding how it works yourself.

I am not responsible for maintaining ReactJS or my Intel CPU. Not understanding it means there might be some performance lost.

I am responsible for the product my company produces. All of our code needs to be understood in-house. You can outsource creation of it, or have an LLM do it, but the company needs to understand it internally.

Roman builders were masters of concrete. That was how they built enormous structures like the Colosseum. They even had a special type of concrete for harbors that reacted chemically with sea water to form an underwater concrete so hard that it’s still in use.

Then Rome slowly collapsed, and the secrets of concrete were lost, and weren’t rediscovered until centuries later. Scientists just figured out the thing with the sea water concrete a few years ago, but they still don’t know the formula.

History is riddled with lost and rediscovered stuff.

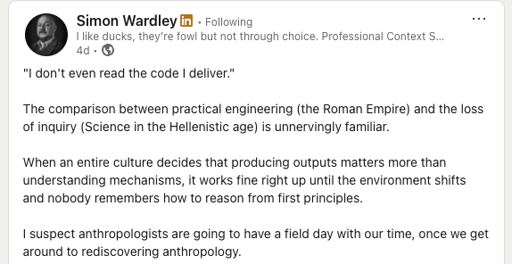

Yes but Simon fucking Wardley (whoever the fuck he is) does not regard them highly.

But someone knows fully how some part works.